As a consultant, you experience different environments, with different architectures, different skill sets, and different processes. Usually there’s just one thing in common: They have serious issues that need immediate attention. Such as using linked servers in SQL.

Once a consultant has some experience under his or her belt, one can usually see symptoms that can be validated quickly while engineering a solution to get the client back on track. Other times, even the most experienced professional encounters the strangest things and is propelled into a warped, upside down environment. Even though this realm is without logic, you still have to find the root issue and solve it.

Just The Facts

For this edition of the series, the client resembles the old “Dragnet” show, so we’ll refer to the client as “Friday.” Friday has an enterprise environment that separated their development/staging and production environments with a separate DMZ. The Friday team had been working on an internal application that they promoted to the production environment after many months of development and staging approval.

The application was functional and, while not blazing fast, did meet their committees’ threshold for responsiveness in the validation stage. Friday went through a normal change control process to propagate this app to production. But, as soon as they put it in production, the application took more than 17 times longer to run. That’s when it actually completed, or otherwise it would simply stall out.

Friday re-verified the production code was the same code in staging. The servers in production were the same class of servers in dev/staging. However, the production servers had at least twice the amount of resources and usually more. In other words, if staging had two CPUs, production had at least four, if not eight. If staging had 16 gig of memory, production had at least 32, if not 64, etc., across the board for disk and network bandwidth.

Clearly, it was unusual for the same code base to perform so poorly in this environment. It should have easily outperformed the previous environment, rather than the other way around. My first thought was that production must have a lot more activity that blocked the app. Alas, I validated there was not any unusual blocking. Additionally, in staging Friday had placed a load to estimate traffic, but in production this application was not live yet. So, testing involved only a single thread/client connected to the application and the database.

Searching for the Usual Suspects

This was indeed one of the strangest things that could happen, so I went straight to eliminating everything external. My assumption was that something had to be wrong with the application server or the network. I traced the application for a few simple processes, found the stored procedures that covered those processes, and executed them locally in SQL Management Studio.

If the process came back OK, this wasn’t a database issue. If it wasn’t OK, we were in for some strange times indeed. Running the stored procs manually on the local server did nothing to help. I then asked if there could be a hardware issue. This too was a dead end as the server was a Virtual SQL Server. Friday was able to get another SQL Server on a different host in under an hour with the same results.

So, nothing left to do but dig in and start tracing the code all the way through the process. I did this in staging, and while I would have done things differently, there was not anything flawed with the logic that would have kept me from migrating the code myself in a code review. The only red flag I had was the use of synonyms when referencing data in another database locally.

Next, I looked at the DMV’s in production to find the longest waiting task and then looked up the stored procedure that corresponded to that piece of code. Once found, I did a Redgate compare to execution in staging to prod and they were identical. I went through the code and saw the same thing, but when I ran just this part of the code it ran forever and had a different execution plan than staging.

Solving the Mystery

Were there different indexes? Were statistics not up to date, or were the right indexes not being selected, because on all the big datasets it always did a table scan when there should have been up-to-date indexes supporting these queries?

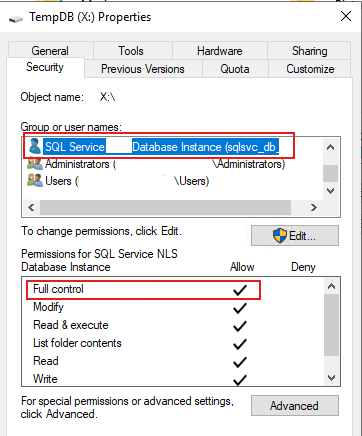

When looking at every table in the query, I finally found the strange issue. In staging the client used the synonym to reference another table in another database, but, in production, the synonym made a linked server call.

There are right ways and wrong ways to leverage the very impressive tool of linked servers in SQL, as documented by our own Randy Knight in “Using Linked Servers the Right Way.” However, I am not sure Friday knew of Mr. Knight’s blog, because they didn’t do it the right way. When you reference data in Server B from Server A, Server A does not know the metadata of Server B. That means it does not know how much data there is coming, what indexes could help with data retrieval, or if the data could fill up the buffer cache and hurt other processing. Therefore, it had to run this data query outside normal, internal, highly optimized routines.

This single dataset that ran in the virtual memory space and then pushed into the buffer cache brought this application to its knees — with just a single user signed in! The solution was a little different than what Mr. Knight showcased but it followed the basic principles in that every time Friday needed data from the external server, they would use the linked server to call a stored procedure remotely on Server B that would return a result set. The proc inserted that result set into a temp table on Server A and then Server A processed the rest of the routine as before.

Surviving the Upside Down…and linked servers in SQL

The moral of this story? Sometimes you find yourself facing the ghost in the machine that warps you directly into the strange realm of impossibility. That’s when you take a step back and start eliminating everything you can by making small, simple processes. Do this and you, too, may survive linked servers in SQL, even when it is turned upside down.